Introduction

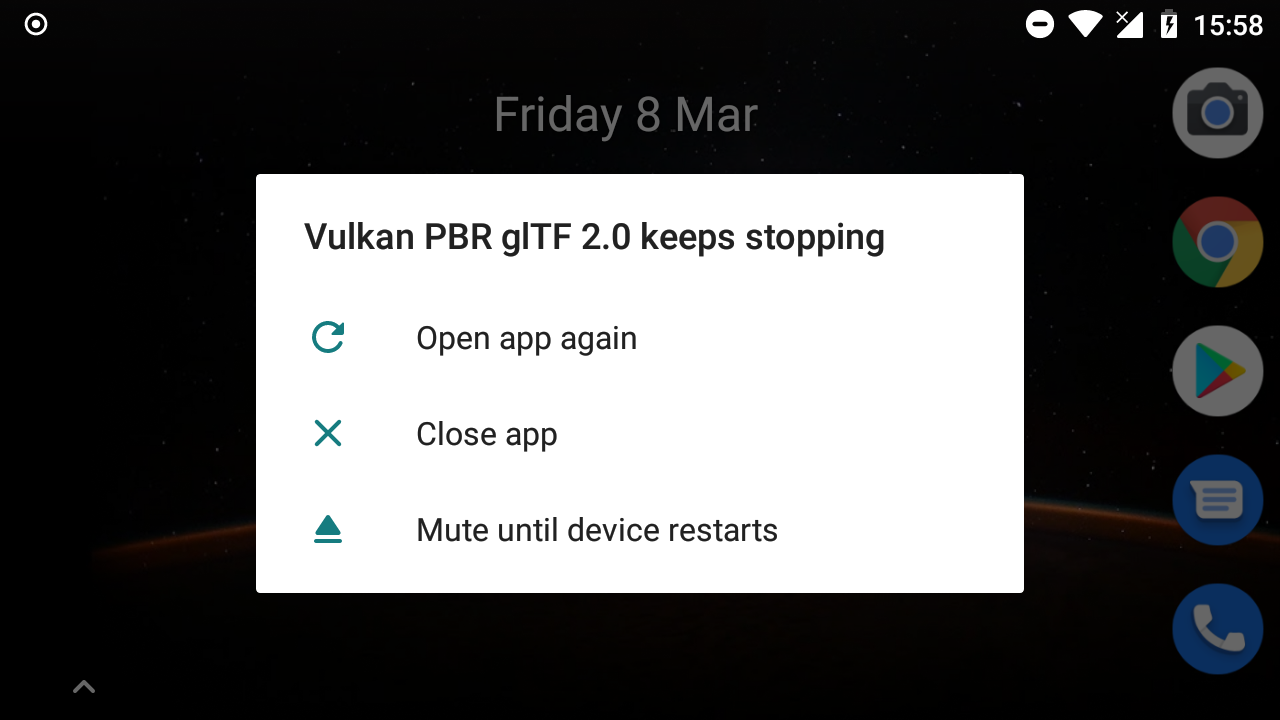

Ever since I started working on my C++ Vulkan glTF PBR application, it bugged me that it just wouldn’t run on my everyday phone. It would crash at a certain point with an error code that the failing function should never return. After a few unsuccessful attempts at finding the cause, I finally got to the root of the problem and was able to get the application up and running.

As this was pretty different from your usual “just look at validation” or “use a graphics debugger” approach, I decided to do a small write-up on it.

On Vulkan devices (or why you would want a low-end device to test your stuff)

If you’re developing with Vulkan and target all (or most) platforms that it’s supported on, it’s always a good idea to test your stuff on a plethora of devices with different hardware capabilities. While it’s nice to develop on high-end hardware only, that’s often just a small fraction of your target audience. So for testing my Vulkan stuff, I own a range of devices for different platforms: multiple high-end desktop GPUs from different vendors, laptops with integrated chipsets (hats off to the Mesa team for their awesome work, btw.), and different Android devices, including recent mobiles from Samsung and an Nvidia Shield TV (which is pretty much the same hardware you’d find in Nintendo’s Switch console).

And then there’s my personal mobile. For personal use, I prefer small and cheap devices for several reasons. So some time ago I decided to import my first phone from China, a Xiaomi Redmi 4X (Vulkan device report). It’s a sub 100€ device with an Adreno 505 GPU. Judging by this table, it seems that this is pretty much the lowest spec GPU you can run Vulkan on, with just 48 ALUs and less than 50 GFLOPS.

Not only is the GPU as low-end as possible, but the drivers aren’t that good either, and on mobile you rarely get updated Vulkan drivers unless you’re with one of the big vendors like Samsung. The first thing I did, though, was replace the modded Android with LineageOS, which seemed to have newer drivers that enabled a few additional features and extensions.

So as for testing, this is pretty much the “perfect” device for low-end tests, with a weak GPU and bad drivers.

The problem

On the Redmi 4X, the demo would always crash trying to generate the irradiance or prefiltered cube map. Both are calculated from the environment cube map using different shaders, each in a single submission that looks like this:

    Begin command buffer
        Change image layout for all cubemap layers (faces) and levels (mip levels) to transfer destination
        For each mip level
            For each face
                Begin render pass
                    Render new face with irradiance or prefilter shader to a separate framebuffer/attachment
                    Copy from that attachment to the current mip level of the current cube face
                End render pass
        Change image layout for all cubemap layers (faces) and levels (mip levels) to shader read
    Submit command buffer

So the demo would submit two big command buffers to the graphics queue, with each submission generating the whole irradiance or prefiltered cube map including all mip levels.
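To get a feeling for how much work ends up in one of these command buffers, here is a minimal sketch that counts the mip levels and the per-face render steps for a given cube map size. The function names are made up for illustration and are not part of the demo, and the sketch assumes a full mip chain down to 1x1.

```cpp
#include <cassert>
#include <cstdint>

// Number of levels in a full mip chain for a square dim x dim image
// (dim, dim/2, ..., 1). Assumes the chain goes all the way down to 1x1.
uint32_t mipLevelCount(uint32_t dim)
{
    assert(dim > 0);
    uint32_t levels = 0;
    while (dim > 0) {
        ++levels;
        dim >>= 1;
    }
    return levels;
}

// Number of "render one face, then copy it" steps recorded into the single
// command buffer: one per cube face and mip level.
uint32_t facePassCount(uint32_t dim)
{
    const uint32_t cubeFaces = 6;
    return mipLevelCount(dim) * cubeFaces;
}
```

For a 64 pixel cube map this gives 7 mip levels and 42 face passes, for a 512 pixel one 10 mip levels and 60 face passes, all of them recorded into one command buffer and handed to the GPU in one go.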

While this works fine on all other devices I tested, even low-spec desktop IGPs, on the Redmi 4X it would always crash before displaying anything.

Running the app through Android Studio’s debugger shows that the crash always happens upon queue submission (the vkQueueSubmit call marked in the code below) on the Redmi 4X:

    void flushCommandBuffer(VkCommandBuffer commandBuffer, VkQueue queue, bool free = true)
    {
        VK_CHECK_RESULT(vkEndCommandBuffer(commandBuffer));

        VkSubmitInfo submitInfo{};
        submitInfo.sType = VK_STRUCTURE_TYPE_SUBMIT_INFO;
        submitInfo.commandBufferCount = 1;
        submitInfo.pCommandBuffers = &commandBuffer;

        // Create fence to ensure that the command buffer has finished executing
        VkFenceCreateInfo fenceInfo{};
        fenceInfo.sType = VK_STRUCTURE_TYPE_FENCE_CREATE_INFO;
        VkFence fence;
        VK_CHECK_RESULT(vkCreateFence(logicalDevice, &fenceInfo, nullptr, &fence));

        // Submit to the queue
        VK_CHECK_RESULT(vkQueueSubmit(queue, 1, &submitInfo, fence)); // <--- Result is VK_ERROR_INITIALIZATION_FAILED

        // Wait for the fence to signal that command buffer has finished executing
        VK_CHECK_RESULT(vkWaitForFences(logicalDevice, 1, &fence, VK_TRUE, 100000000000));

        vkDestroyFence(logicalDevice, fence, nullptr);

        if (free) {
            vkFreeCommandBuffers(logicalDevice, commandPool, 1, &commandBuffer);
        }
    }

Going back through the call stack, this submission is triggered by the cube map generation function mentioned above.

Finding the root of the problem

As this crash is reproducible and always caused by the same piece of code, it’s now time to find out why this happens.

Using tools (no luck)

Validation layers won’t output anything, so in terms of API usage the code is fine. While this is great in general, no validation layer messages usually means that the bug isn’t easy to catch and is often caused by the device or the driver.

But those of you that are familiar with Vulkan might already have spotted something odd. The result for vkQueueSubmit is VK_ERROR_INITIALIZATION_FAILED. Looking at the man page for vkQueueSubmit, this is not a valid return code for that function, and that’s often a hint at a bad or faulty driver. So that error code won’t help us either.
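A check like this can also be put into code. The following is a minimal sketch, not something the demo does: it tests a result against the failure codes that, to my knowledge, the Vulkan 1.0 man page lists for vkQueueSubmit. To keep it compilable without the Vulkan headers, it uses a small stand-in enum (hypothetical, named SubmitResult here) that mirrors the numeric values of the corresponding VkResult codes. Newer revisions of the spec may list additional codes.

```cpp
#include <cstdint>

// Stand-in for the handful of VkResult values used here, so the sketch
// compiles without vulkan.h. Values mirror the real VkResult enum.
enum class SubmitResult : int32_t {
    Success              = 0,   // VK_SUCCESS
    OutOfHostMemory      = -1,  // VK_ERROR_OUT_OF_HOST_MEMORY
    OutOfDeviceMemory    = -2,  // VK_ERROR_OUT_OF_DEVICE_MEMORY
    InitializationFailed = -3,  // VK_ERROR_INITIALIZATION_FAILED
    DeviceLost           = -4   // VK_ERROR_DEVICE_LOST
};

// Returns true if the result is one of the codes documented for
// vkQueueSubmit in the Vulkan 1.0 man page (assumption: success, the two
// out-of-memory codes and device lost).
bool isDocumentedQueueSubmitResult(SubmitResult result)
{
    switch (result) {
    case SubmitResult::Success:
    case SubmitResult::OutOfHostMemory:
    case SubmitResult::OutOfDeviceMemory:
    case SubmitResult::DeviceLost:
        return true;
    default:
        return false;
    }
}
```

With this, the code returned on the Redmi 4X is flagged as undocumented, which points at the driver rather than at the application.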

The next step is to put the app through a debugger. The Adreno GPUs (as part of the Snapdragon SoCs) are made by Qualcomm, and they offer a profiler for their SoCs. But sadly this didn’t help either, as it didn’t seem to trace anything before the first presentation, so again no step closer to finding the root of the crash.

Manually getting there

So now that none of the usual debugging tools helped shed light on the root of the problem, it’s time to resort to the last and often best debugging tool: the programmer’s brain, combined with at least some amount of graphics programming experience.

We somehow need to find out why a queue submission could fail. We can rule out logical errors or wrong API usage thanks to a clean validation, and stepping through with the debugger shows that all objects are properly initialized, barriers are fine, etc.

But when running the application, it’s obvious that loading assets and generating textures (before the crash occurs) takes much longer than on all other devices. So that VK_ERROR_INITIALIZATION_FAILED may actually hint at an operation that takes too long to finish, so the driver times out or hits some memory limitation. If that’s the case, though, it should actually return something like VK_ERROR_DEVICE_LOST.

Still, that sounds like a possibility, so the next step is trimming down the time for that cube map generation to see if that’s really the problem. That’s actually pretty easy by just reducing the size of the cube maps we want to generate from the environment cube map loaded from file:

    void generateCubemaps()
    {
        ...
        switch (target) {
        case IRRADIANCE:
            format = VK_FORMAT_R32G32B32A32_SFLOAT;
            dim = 16; // was 64
            break;
        case PREFILTEREDENV:
            format = VK_FORMAT_R16G16B16A16_SFLOAT;
            dim = 16; // was 512
            break;
        };
        ...
    }

And running this with greatly reduced target cube map sizes actually makes the sample render something!

So the crash really seems to be caused by the cube map generation taking so long that the driver runs into a timeout or hits some memory limitation!

Obviously this can’t be the solution though, as that resolution is far too small and even slightly increasing it would crash again.

But now that we know that the cause for the crash is the actual weight of the submission, we can start trying to fix this.
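To put a rough number on how much lighter the reduced submissions are, the texels that need to be rendered can be counted. This is only a sketch with a made-up helper name, and the texel count is just a proxy: it assumes a full mip chain down to 1x1 and ignores that the two shaders have different per-texel costs.

```cpp
#include <cstdint>

// Total number of texels in a cube map with a full mip chain:
// 6 faces per level, with level sizes dim, dim/2, ..., 1.
uint64_t cubeMapTexelCount(uint32_t dim)
{
    uint64_t texels = 0;
    for (uint32_t size = dim; size > 0; size >>= 1) {
        texels += 6ull * size * size;
    }
    return texels;
}
```

Going from 512 to 16 pixels shrinks the prefiltered cube map from 2,097,150 to 2,046 texels, a factor of 1025. The irradiance cube map goes from 32,766 to 2,046 texels, roughly a factor of 16.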

Fixing the crash

With the above conclusion we can now finally work out a solution for this crash. As this seems to be caused by the submission being too heavy for the GPU to lift, we just need to split it up into several small submissions.

So instead of the single submission mentioned above, I reworked the cube map generation to use multiple granular submissions so the GPU won’t time out:

    Begin command buffer
        Change image layout for all cubemap layers (faces) and levels (mip levels) to transfer destination
    Submit command buffer
    For each mip level
        For each face
            Begin command buffer
                Begin render pass
                    Render new face with irradiance or prefilter shader to a separate framebuffer/attachment
                    Copy from that attachment to the current mip level of the current cube face
                End render pass
            Submit command buffer
    Begin command buffer
        Change image layout for all cubemap layers (faces) and levels (mip levels) to shader read
    Submit command buffer

With these changes, submissions are much lighter, giving the GPU some room to “breathe” between them and avoiding the risk of running into driver timeouts.
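The control flow of the split-up generation can be sketched like this. It is not the demo’s actual code: the CubeMapCallbacks struct and its members are hypothetical placeholders for the real Vulkan recording and submission code, with submitAndWait standing in for something like the flushCommandBuffer helper shown earlier.

```cpp
#include <cstdint>
#include <functional>

// Hypothetical hooks standing in for the real Vulkan calls.
struct CubeMapCallbacks {
    std::function<void()> beginCommandBuffer;       // allocate + begin a command buffer
    std::function<void()> submitAndWait;            // end, submit, wait on a fence
    std::function<void()> transitionToTransferDst;  // all faces and mip levels
    std::function<void()> transitionToShaderRead;   // all faces and mip levels
    std::function<void(uint32_t mip, uint32_t face)> renderAndCopyFace;
};

// One small submission per face and mip level instead of one big one.
void generateCubeMapSplit(uint32_t mipLevels, const CubeMapCallbacks& cb)
{
    const uint32_t cubeFaces = 6;

    // First submission: only the layout transition
    cb.beginCommandBuffer();
    cb.transitionToTransferDst();
    cb.submitAndWait();

    // One submission per rendered face
    for (uint32_t mip = 0; mip < mipLevels; ++mip) {
        for (uint32_t face = 0; face < cubeFaces; ++face) {
            cb.beginCommandBuffer();
            cb.renderAndCopyFace(mip, face);
            cb.submitAndWait();
        }
    }

    // Last submission: transition for sampling in the PBR shaders
    cb.beginCommandBuffer();
    cb.transitionToShaderRead();
    cb.submitAndWait();
}
```

For a cube map with 7 mip levels this results in 44 small submissions (42 face passes plus the two layout transitions). Waiting on a fence after every submission costs some time on fast GPUs, but as this only runs once at startup, that seems like an acceptable trade-off.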

And yes, running the demo with these changes actually makes it work on the low-specced Redmi 4X.

And yes, you read that right. It’s running at a full 4 frames per second. Enabling animation makes it drop down even lower ;)

While that’s not really interactive, dropping resolution or displaying less complex models makes this run with at least half-way interactive frame rates even on that low-spec GPU.